Harmful Stuff

For tech-weary Midwest farmers, 40-year-old tractors now a hot commodity (2020/01/13)

The other big draw of the older tractors is their lack of complex technology. Farmers prefer to fix what they can on the spot, or take it to their mechanic and not have to spend tens of thousands of dollars.

“The newer machines, any time something breaks, you’ve got to have a computer to fix it,” Stock said.

Read more here.

bloomberg.com (2019/05/07)

Read more here.

Couldn’t sign you in (2018/11/13)

Read more here.

Microsoft acquires go get (2018/06/04)

Read more here.

Young Woman or Old Woman (2018/06/04)

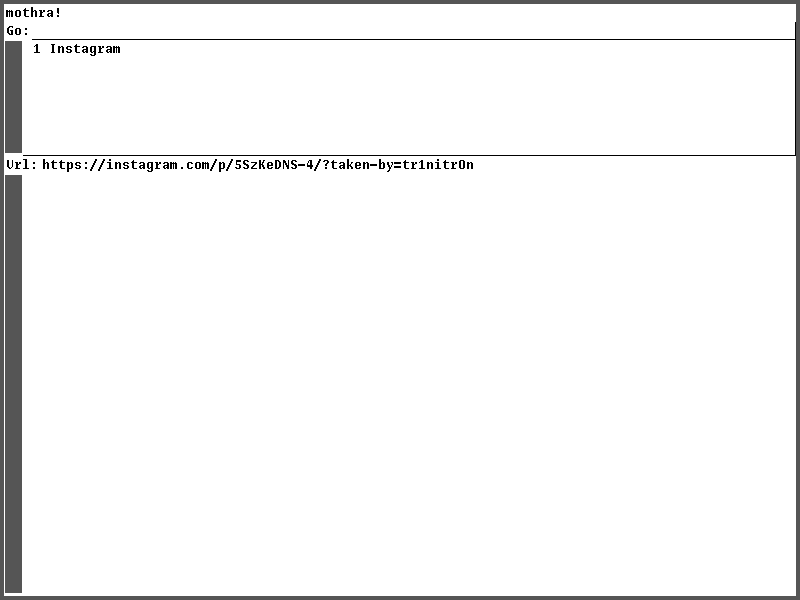

How Instagram’s algorithm works:

Read more here.

The UNIX Philosophy (2018/05/31)

short story in one image (2018/05/16)

Total Meltdown? (2018/03/27)

Meet the Windows 7 Meltdown patch from January. It stopped Meltdown but opened up a vulnerability way worse … It allowed any process to read the complete memory contents at gigabytes per second, oh - it was possible to write to arbitrary memory as well.

Read more here.

Don’t get ripped off on an SXGA+ screen for your X61/X62 (2018/02/15)

ATTENTION THINKPAD MODDERS

xiphmont reports:

Most ‘new’ HV121P01-100 SXGA+ screens for sale on ebay, AliExpress, etc, are neither genuine nor new.

Read more here.

YOU WILL NOT UNDERSTAND THIS (2017/11/16)

Your esteemed editor jettisons dead weight:

This is my exit interview from social media. Some of these “services” I abandoned years ago. Others I am still struggling to avoid. From experience, I assume that you, reader, will make it impossible for me to remain ignorant of developments WRT: all of this garbage. What all of this garbage has in common is that it has all lately proven to be surplus to requirements, and soon I will never voluntarily use any of it ever again.

Read the entire article here.

Update:

HN disagrees.

webshit weekly (2016/12/08)

An annotated digest of the top “Hacker” “News” posts:

8cc.vim: Pure Vim script C Compiler (2016/10/20)

This is a Vim script port of 8cc built on ELVM. In other words, this is a complete C compiler written in Vim script.

8cc is a nicely written small C compiler for x86_64 Linux. It’s C11-aware and self-hosted. ELVM is an Eso Lang Virtual Machine. ELVM customizes 8cc to emit its own intermediate representation, EIR, as its frontend: the frontend compiles C code into EIR, and the backend then translates EIR into various targets (Python, Ruby, C, BrainFxxk, Piet, Befunge, Emacs Lisp, …). The architecture resembles LLVM. This presentation is a good resource for learning more about the ELVM architecture (though it is in Japanese).

ELVM can compile itself into various targets, so I added a new ‘Vim script’ backend and used it to translate the C code of 8cc into Vim script.

Now 8cc.vim is written in pure Vim script. 8cc.vim consists of a frontend (customized 8cc) and a backend (ELC). It can compile C code into Vim script, and of course Vim can evaluate the generated Vim script code.

Note that this is a toy project. 8cc.vim is much, much slower. It takes 824 (frontend: 430 + backend: 396) seconds to compile the simplest putchar() program on a MacBook Pro (Early 2015, 2.7 GHz Intel Core i5). But it actually works!

As the VM runs in Vim script, 8cc.vim works on Linux, OS X and (hopefully) Windows.

Read more here.
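The frontend/backend split described above can be sketched in a few lines. This is a toy illustration under our own assumptions, not ELVM's code. The three-opcode IR and the names `frontend`, `backend_python`, `backend_vim` and `run_python` are all hypothetical, and the real EIR is considerably richer. One frontend lowers its input to IR, and each backend translates that same IR into a different target language.

```python
from typing import List, Tuple, Union

# Hypothetical three-opcode IR, far smaller than ELVM's real EIR:
#   ("mov", reg, imm)   reg = imm
#   ("add", reg, imm)   reg += imm
#   ("putc", reg)       output chr(reg)
Instr = Tuple[Union[str, int], ...]


def frontend(text: str) -> List[Instr]:
    """Toy 'frontend': lower a string literal to IR that prints it."""
    ir: List[Instr] = [("mov", "a", 0)]
    current = 0
    for ch in text:
        # Step the register from the previous code point to the next one.
        ir.append(("add", "a", ord(ch) - current))
        ir.append(("putc", "a"))
        current = ord(ch)
    return ir


def backend_python(ir: List[Instr]) -> str:
    """Translate IR to Python source that collects its output in `out`."""
    src = ["out = []"]
    for op, *args in ir:
        if op == "mov":
            src.append(f"{args[0]} = {args[1]}")
        elif op == "add":
            src.append(f"{args[0]} += {args[1]}")
        elif op == "putc":
            src.append(f"out.append(chr({args[0]}))")
        else:
            raise ValueError(f"unknown opcode {op!r}")
    return "\n".join(src)


def backend_vim(ir: List[Instr]) -> str:
    """Translate the same IR to Vim script, accumulating output in s:out."""
    src = ["let s:out = ''"]
    for op, *args in ir:
        if op == "mov":
            src.append(f"let s:{args[0]} = {args[1]}")
        elif op == "add":
            src.append(f"let s:{args[0]} += {args[1]}")
        elif op == "putc":
            src.append(f"let s:out .= nr2char(s:{args[0]})")
        else:
            raise ValueError(f"unknown opcode {op!r}")
    src.append("echo s:out")
    return "\n".join(src)


def run_python(ir: List[Instr]) -> str:
    """Execute the Python backend's output and return what it 'printed'."""
    namespace: dict = {}
    exec(backend_python(ir), namespace)
    return "".join(namespace["out"])


print(run_python(frontend("Hi")))  # Hi
print(backend_vim(frontend("Hi")))
```

Adding a target means writing one more `backend_*` function. The frontend does not change, which is the property that let ELVM gain a Vim script backend.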

Related (2016/10/18)

How it feels to learn JavaScript in 2016 (2016/10/18)

You know what. I think we are done here. Actually, I think I’m done. I’m done with the web, I’m done with JavaScript altogether.

Read the full article here.

hello, world (2016/09/12)

if you are confident no hackers are within 100 feet (2016/07/02)

Douglas Crockford (2016/06/25)

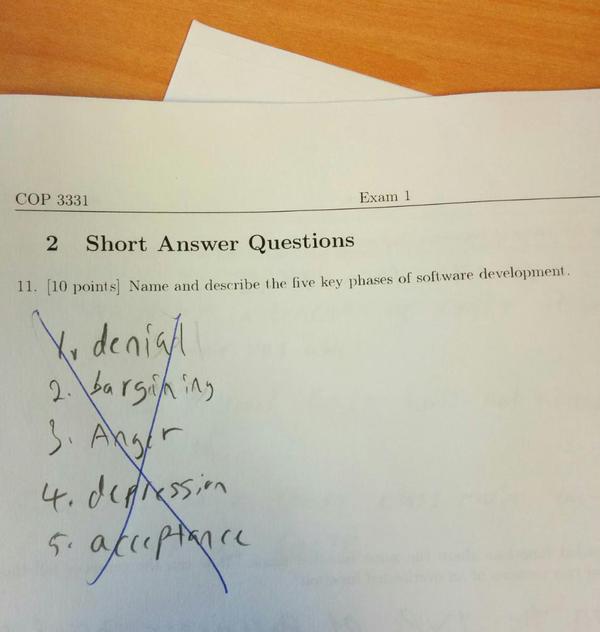

Five states of software development (2016/06/22)

emailreg.com (2015/10/29)

OpenBSD (2015/10/20)

```
CVSROOT:        /cvs
Module name:    src
Changes by:     deraadt@cvs.openbsd.org 2015/10/17 18:04:43

Modified files:
    sys/kern       : syscalls.master kern_pledge.c uipc_syscalls.c
    sys/sys        : pledge.h proc.h socketvar.h
    sys/netinet    : in_pcb.c
    sys/netinet6   : in6_pcb.c

Log message:
Add two new system calls: fbsocket() and fbconnect(). This creates a
SS_FACEBOOK tagged socket which has limited functionality (for example,
you cannot accept on them...) The libc farmville will switch to using
these, therefore pledge can identify a facebook transaction better.

ok tedu guenther kettenis beck and others
```

Google (2015/10/19)

What’s harmful about Google?

Anonymous 10/19/15 (Mon) 18:01:02 No. 416982

416422

liking any corporation ever

thinking a structure where key people are constantly replaced and everyone has to fake doing good work to not get fired / replaced has any stable identity

but other than that Google has been pretty reliable and fair to their users.

okay, now fuck off.

Google is just another corporation. The problem is lots of le geeks fap about Google because it’s Super Innovative etc. The only useful thing Google ever did was make a search engine that wasn’t as buggy and as filled with annoying ads as the other ones. That was in the early 2000s.

But now all the search engines are pretty much the same. Also Bing* actually has a feature to turn off safe search. Google slowly phased out that option and replaced it with some bullshit that turns off safe search for your search if you add “porn” or some trigger word to your search. Also Google used to like actually show pages that contain the words you search. Now it just “knows what you mean” and pulls up some completely irrelevant garbage almost every fucking time.

Another innovation brought to you by Google is the prevalence of this Recaptcha trash on every fucking page of the internet. Pretty much half the time the captcha will be 3 numbers. The rest of the time it’s either one of:

- Picture captcha: select all 4 of the images with street signs, but there are only actually 3 images with street signs here. Please repeat the same scenario 3-15 more times so we can see that you aren’t a robot. Similar scenarios with burgers and ice cream. Also note that this captcha only appears when the “I’m not a robot” button failed (I’ve never seen it not fail… even without tor and on Windows). The “I’m not a robot” button is essentially DRM. You must have a conforming browser so they can see that the series of input/output events looks statistically like a human. The problem here is that “conforming browser” simply means Firefox, Chrome, IE or one of the other big ones. You cannot possibly make a browser from scratch and have it pass this button test. If you can, it means the button test is trivial to defeat. But I assume that’s not the case, and it relies on the behavior of millions of lines of code in Firefox/Chrome/etc.

- Nazi word captcha: usually full of rnmrnrnmrnmrnmrnmr uvuvuvuvuvuwuwvuwwvu etc. They succeed 99% of the time at building a completely ambiguous string, where your answer depends on the order in which you read the characters, whether you look ahead, the amount of time you spend re-reading the captcha, the mood you’re in, etc. You are almost guaranteed to fail this captcha unless you retry it like 15 times or it’s on fuzzy mode and it just accepts a string that’s similar to the captcha shown (for example ul.to is on fuzzy mode for some reason; I have no idea how this is activated or if google just decides ul.to will have this mode activated for some reason).

- They just block you depending on which tor exit node you use. There’s nothing more ironic than blocking people from using your captcha.

Worse yet, Google seems to be the ones who started the fad of requiring a cell phone to sign up to a fucking website. And they brought that cancer to youtube. Fuck that shit right off. I still have a gmail account I registered before they switched to requiring a cell phone to sign up. It’s still annoying as fuck to use because they are constantly threatening that they’re going to give someone my account because they added secret question/cellphone/etc backdoor #35782357823 to my account or the XSS of the day (yes, Google routinely has XSS vulns too, even trivial ones, despite that they are super leet haxors. WTF do you expect? It’s a corporation and software companies are not responsible for security errors - they can just appeal to “Security is Hard” every time).

416570

The open source community is utter shit regardless.

- I’m not shilling Bing. Bing has a shitty UI for image search where you have to wait for it to make remote requests before showing the window that has the image and link to the page. This combined with all the animation bullshit in the UI is incredibly annoying on my under $1000 computer over tor. Also they used to use JS/popup to implement the “view page containing this image” button, which was guaranteed to be blocked as a popup, but they finally replaced that with a normal link after years.

Read more in the mostly lame 8ch thread.

instagram (2015/07/21)

Crashing iPad App Grounds Dozens of American Airline Flights (2015/05/01)

Insanely great:

American Airlines was forced to delay multiple flights on Tuesday night after the iPad app used by pilots crashed. Introduced in 2013, the cockpit iPads are used as an “electronic flight bag,” replacing 16kg (35lb) of paper manuals which pilots are typically required to carry on flights. In some cases, the flights had to return to the gate to access Wi-Fi to fix the issue.

Read more here.

Boeing advises periodically rebooting 787 (2015/05/01)

The US Federal Aviation Administration (FAA) has issued a new airworthiness directive (PDF) for Boeing’s 787 because a software bug shuts down the plane’s electricity generators every 248 days.

“We have been advised by Boeing of an issue identified during laboratory testing,” the directive says. That issue sees “The software counter internal to the generator control units (GCUs) will overflow after 248 days of continuous power, causing that GCU to go into failsafe mode.”

Read more here.
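The quoted directive does not say how the GCU counter is built. A widely repeated explanation, which we adopt here only as an assumption, is a signed 32-bit counter that ticks every 10 ms. Under that assumption the arithmetic lands almost exactly on 248 days. `days_until_overflow` and `tick` are hypothetical helpers for illustration.

```python
# Assumption: a signed 32-bit tick counter incremented 100 times per second.
# The FAA directive itself does not state the counter's width or tick rate.
INT32_MAX = 2**31 - 1
TICKS_PER_SECOND = 100
SECONDS_PER_DAY = 24 * 60 * 60


def days_until_overflow(max_value: int = INT32_MAX,
                        ticks_per_second: int = TICKS_PER_SECOND) -> float:
    """Days of continuous power before the counter passes max_value."""
    return (max_value + 1) / ticks_per_second / SECONDS_PER_DAY


def tick(counter: int) -> int:
    """Increment with two's-complement wraparound, as int32 hardware
    arithmetic typically behaves (in C, signed overflow is formally UB)."""
    counter += 1
    if counter > INT32_MAX:
        counter -= 2**32  # wraps to -2**31
    return counter


print(f"{days_until_overflow():.2f}")  # 248.55
```

The result matches the directive's 248 days. Under the same assumption, it also explains why a periodic power cycle works as a fix: rebooting resets the counter to zero before it can wrap. A 64-bit counter at the same rate would take roughly 2.9 billion years to overflow.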

Everyone has JavaScript, right? (2015/04/24)

From kryogenix.org:

- Your user requests your web app
- Has the page loaded yet?
  - “All your users are non-JS while they're downloading your JS” (Jake Archibald)
- Did the HTTP request for the JavaScript succeed?
  - If they're on a train and their net connection goes away before your JavaScript loads, then there's no JavaScript.
- Did the HTTP request for the JavaScript complete?
  - How many times have you had a mobile browser hang forever loading a page and then load it instantly when you refresh?
- Does the corporate firewall block JavaScript?
  - Because loads of them still do.
- Does their ISP or mobile operator interfere with downloaded JavaScript?
  - Sky accidentally block jQuery, Comcast insert ads into your script, and if you've never experienced this before, drive to an airport and use their wifi.
- Have they switched off JavaScript?
  - People still do.
- Do they have addons or plugins installed which inject script or alter the DOM in ways you didn't anticipate?
  - There are thousands of browser extensions. Are you sure none interfere with your JS?
- Is the CDN up?
  - CDNs are good at staying up (that’s what being a CDN is) but a minute of downtime a month will still hit users who browse in that minute.
- Does their browser support the JavaScript you’ve written?
  - Check Can I Use for browser usage figures.

Is all the above true?

Then yes, your JavaScript works.

Probably.

Progressive enhancement. Because sometimes your JavaScript just won’t work.

Be prepared.
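The closing “Probably.” is just multiplication. Every question on the checklist has to come out right, so if the checks fail independently, the chance that your script runs is the product of their individual pass rates. Here is a minimal sketch that assumes independence and uses a made-up pass rate of 99% for each of the nine checks. That figure is a placeholder, not a measurement, and `p_all_pass` is a hypothetical helper.

```python
from math import prod
from typing import Sequence


def p_all_pass(pass_rates: Sequence[float]) -> float:
    """Chance that every independent check passes: the product of the rates."""
    for p in pass_rates:
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"not a probability: {p}")
    return prod(pass_rates)


# Nine checks, each passing a hypothetical 99% of the time.
rates = [0.99] * 9
print(f"{p_all_pass(rates):.3f}")  # 0.914
```

Even with every check at 99%, about 8.6% of visits would end without working JavaScript, and that is the case progressive enhancement is meant to cover.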

fizzbuzz (2015/04/09)

Old, but it’s still what it is.

Google won’t allow the co-inventor of Unix and the C language to check in code, because he won’t take the mandatory language test.

Read more here.

Barely Metal (2015/03/31)

Running on bare metal is being made possible in large part by virtualisation technologies such as Xen which provide standard virtual networking and file system interfaces. These virtualised interfaces mean that bare metal solutions don’t need hardware device driver support, making the core concept much easier to implement.

Read more at the Not Constructive blog.

Google Summer of Code 2015 (2015/03/02)

content, pt. 3 (2015/02/24)

```
; hget http://www.xojane.com/ | htmlfmt
; hget http://www.xojane.com/ | wc
170 470 6699
;
```
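The numbers on the last output line are wc's default counts. If Plan 9's wc reports them in the same order as Unix wc (lines, words, bytes), which we assume here, the fetched page is 170 lines, 470 words and 6699 bytes. The following is a rough Python equivalent of that counting. `wc` is a hypothetical helper for illustration, not the Plan 9 source.

```python
from typing import Tuple


def wc(data: bytes) -> Tuple[int, int, int]:
    """Count (lines, words, bytes) the way wc does by default.

    Lines are newline characters, so a final line without a trailing
    newline is not counted; words are runs of non-whitespace bytes.
    """
    lines = data.count(b"\n")
    words = len(data.split())  # bytes.split() with no argument splits on whitespace runs
    return lines, words, len(data)


print(wc(b"hello world\nfoo\n"))  # (2, 3, 16)
```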

HTTP/2.0 — The IETF is Phoning It In (2015/01/07)

Some will expect a major update to the world’s most popular protocol to be a technical masterpiece and textbook example for future students of protocol design. Some will expect that a protocol designed during the Snowden revelations will improve their privacy. Others will more cynically suspect the opposite. There may be a general assumption of “faster.” Many will probably also assume it is “greener.” And some of us are jaded enough to see the “2.0” and mutter “Uh-oh, Second Systems Syndrome.”

The cheat sheet answers are: no, no, probably not, maybe, no and yes.

If that sounds underwhelming, it’s because it is.

Read more at acmqueue.

To Wash It All Away (2014/11/16)

James Mickens' classic rant (USENIX ;login:, March 2014, pp. 2-8):

Describing why the Web is horrible is like describing why it’s horrible to drown in an ocean composed of pufferfish that are pregnant with Tiny Freddy Kruegers

Download the PDF.

this is why software sucks (2014/07/23)

Ted Unangst’s thoughts on recent developments in the LibreSSL world:

It’s been a week and change since the first LibreSSL portable release was announced to much sturm und drang. I’m not directly involved, but a few thoughts and reflections on the release and its reception. (Deliberately missing some links; do your own digging if you care.)

Most people seemed pretty happy with the release. That was good. There were a few portability wrinkles. They got fixed. That was good.

Then there were the “LibreSSL is an unsafe catastrophe” fun times. My earlier thoughts.

Now what was missing was any mention of prior art. Like CVE-2013-1900. Is PostgreSQL unsafe? Or CVE-2014-0017. Is stunnel unsafe? These are real programs, presumably trying to be secure, with real exploits. Not carefully crafted samples that aided in their own exploitation. Perhaps it’s OpenSSL that’s unsafe? It’s not like OpenSSL hasn’t had its own fixes for trouble with pid reuse.

I don’t have much involvement in portable, but I definitely had a hand in neutering the RAND and egd interfaces. Contrary to some commentary, we didn’t neuter these interfaces because we didn’t know what they were. We neutered them because we know precisely what they are. They’re fucking stupid.

The next turn of events was the notion that the getpid/atfork fixes were then rushed out the door. It’s sad when “Bug fixed in timely manner” is headline worthy news. It’s twisted when it’s spun as something bad. What else should one do after receiving a bug report via blog post via front page news? Sit on it? What purpose would that serve? Or, phrased differently, what narrative does a delayed fix facilitate?
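Some background on the getpid fix mentioned above: when a process forks, its userland random-number state is copied, so without a check the parent and child can hand out identical “random” bytes. A getpid check compares the current pid with the one recorded when the state was seeded, and reseeds on a mismatch. The sketch below illustrates that idea in Python under our own assumptions. `PidCheckedRNG` and its `get_pid` hook are hypothetical names, and this is not LibreSSL's arc4random code. As the pid-reuse CVEs above suggest, a pid comparison alone can be fooled when a pid is recycled. As we understand it, that is one reason the fixes pair it with a fork handler (`pthread_atfork`).

```python
import os
import random
from typing import Callable


class PidCheckedRNG:
    """Toy userland RNG that reseeds itself when the process ID changes.

    Illustration only, not LibreSSL's arc4random. random.Random (Mersenne
    Twister) is NOT a cryptographic generator; it stands in for one here.
    `get_pid` is a hypothetical hook so a fork can be simulated.
    """

    def __init__(self, get_pid: Callable[[], int] = os.getpid) -> None:
        self._get_pid = get_pid
        self.reseeds = 0
        self._reseed()

    def _reseed(self) -> None:
        # Pull fresh kernel entropy and record which process owns this state.
        self._rng = random.Random(os.urandom(32))
        self._pid = self._get_pid()
        self.reseeds += 1

    def randbytes(self, n: int) -> bytes:
        # After fork() the child inherits _pid from the parent, so a mismatch
        # means this state is a copy that the parent may also be using.
        if self._get_pid() != self._pid:
            self._reseed()
        return bytes(self._rng.getrandbits(8) for _ in range(n))


# Simulate a fork by changing what get_pid reports.
fake_pid = [100]
rng = PidCheckedRNG(get_pid=lambda: fake_pid[0])
rng.randbytes(16)   # same process: no reseed
fake_pid[0] = 101   # pretend we are now the child
rng.randbytes(16)   # pid changed: state is reseeded first
print(rng.reseeds)  # 2
```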

Finally came the report that the atfork fix was all wrong, which was then edited to be rather less wrong. Hey, that’s ok, we all make mistakes. I’m more disappointed with the chorus of tweeters and sharers and likers who rushed to spread the bad news without apparently reading or understanding it, simply because it slotted nicely into the “wrong wrong wrong” story they had going.

Not to say all criticism is unwarranted or unwelcome. Indeed, with every release, Bob specifically asks for criticism, though I think he calls it feedback.

Whether for serious or for fun, phk and djb have each conjectured a massive all encompassing conspiracy dedicated to maintaining the status quo and preventing the development of secure software. I’m not sure I believe in such a conspiracy, but I am certain that should it exist, it could not possibly hope to be more disruptive. On the one hand we have people claiming the OpenSSL API is too broken to work with (they may have a point); on the other hand we have people demanding that we maintain every last misfeature piece of shit function in that API.

Do you want software to stop being shit? Then stop expecting… nay, stop demanding that software be shit.